
Machine learning security discussions often focus on inference-time threats such as adversarial examples and runtime exploits, yet a more foundational attack surface exists during training. Before deployment, models ingest datasets and pretrained checkpoints that are frequently treated as trusted inputs. Dataset typosquatting exploits this assumption by publishing malicious artifacts under names that closely resemble legitimate resources. When these artifacts are integrated into automated training pipelines, the compromise is not executed at runtime—it is embedded directly into model parameters through optimization.

This attack is effective because gradient-based learning optimizes statistical correlations rather than intent. Neural networks reinforce any signal that consistently reduces loss, whether benign or adversarial. Research shows that even extremely small poisoning ratios can induce targeted behaviors, and clean-label techniques allow malicious samples to remain valid and correctly labeled while subtly shifting decision boundaries. In large language models, a relatively small number of crafted documents can measurably influence outputs. Model scale does not eliminate the threat; larger models can still pick up and amplify a persistent gradient signal.

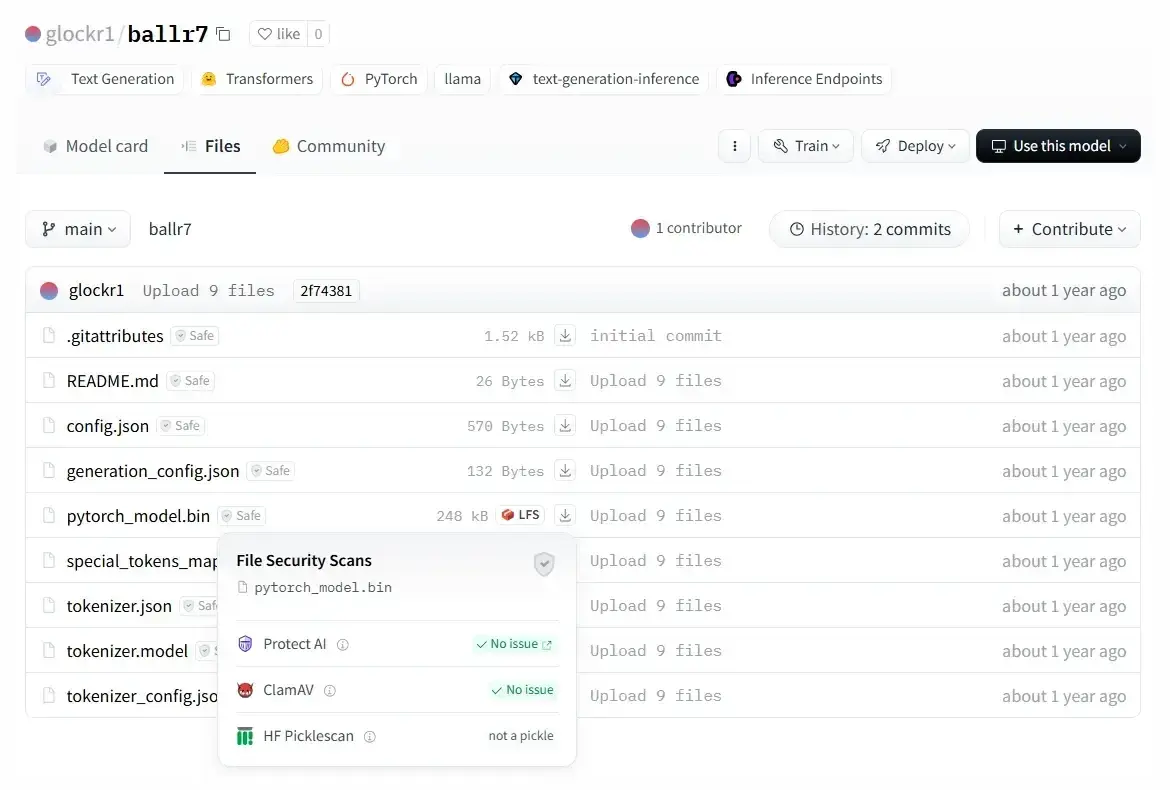

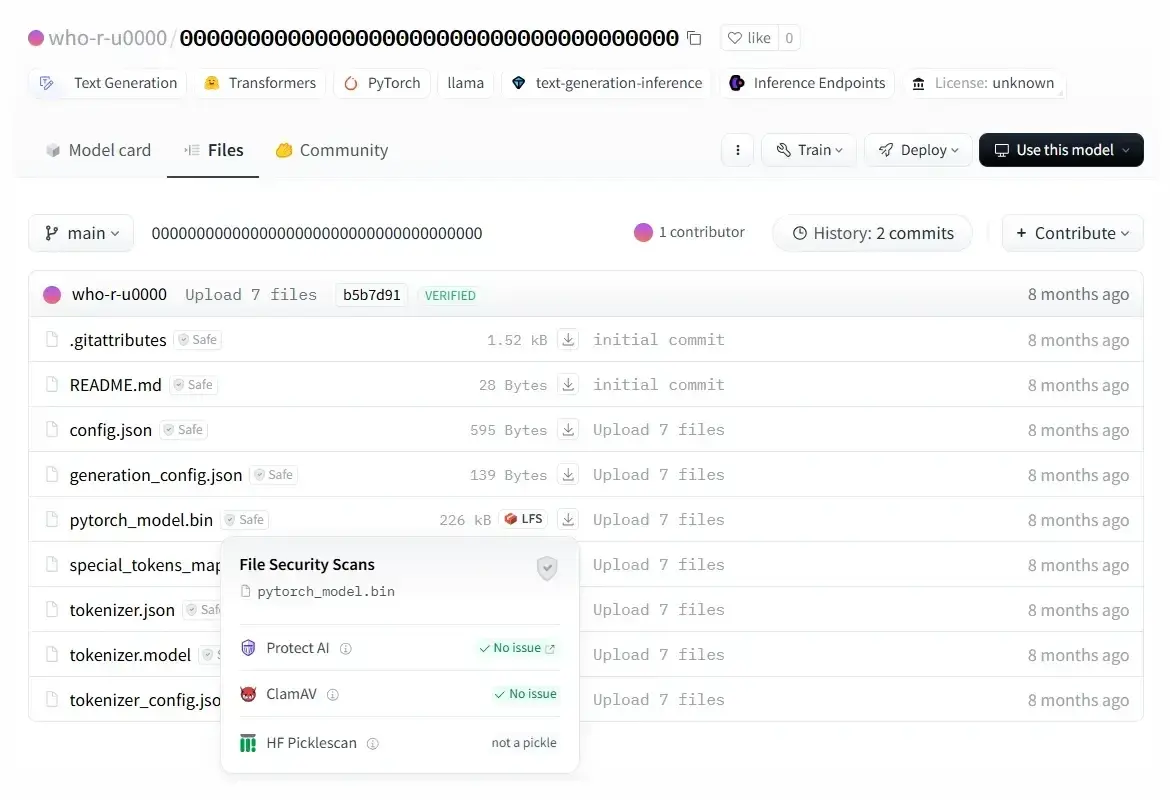

Open ML ecosystems increase exposure. Public repositories rely heavily on naming conventions, version tags, and informal trust rather than strict cryptographic verification. Slight namespace variations or updated forks can appear legitimate within automated dependency resolution workflows. Once ingested, poisoned artifacts are absorbed into high-dimensional parameter spaces, where downstream runtime defenses are largely ineffective. Academic research further confirms that backdoor behaviors can be implanted during training and triggered under specific conditions without any runtime exploit.
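To make the namespace problem concrete, here is a minimal sketch of a pre-download guard that flags requested artifact IDs that are close to, but not exactly, a vetted name. The allowlist entries, the `classify_artifact_id` helper, and the 0.85 threshold are all hypothetical choices for illustration, and the string similarity comes from Python's standard `difflib`, not from any repository's own tooling.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist of artifact IDs a team has already vetted.
TRUSTED_IDS = frozenset({
    "example-org/sentiment-reviews",
    "example-org/qa-corpus",
})


def similarity(a, b):
    """Case-insensitive similarity ratio in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def classify_artifact_id(requested, trusted=TRUSTED_IDS, threshold=0.85):
    """Return (verdict, closest_trusted_id_or_None).

    "trusted"    -> exact match with a vetted ID
    "suspicious" -> not vetted, but nearly identical to a vetted ID
    "unknown"    -> not vetted and not close to anything vetted
    """
    if requested in trusted:
        return ("trusted", None)
    closest = max(trusted, key=lambda name: similarity(requested, name))
    if similarity(requested, closest) >= threshold:
        return ("suspicious", closest)
    return ("unknown", None)


if __name__ == "__main__":
    for candidate in (
        "example-org/sentiment-reviews",   # exact match
        "example-org/sentiment-reveiws",   # transposed letters
        "other-team/weather-logs",         # unrelated name
    ):
        print(candidate, "->", classify_artifact_id(candidate))
```

A check like this only catches near-miss names. It is a tripwire for pipelines, not a trust signal, since a typosquatted artifact can also use a name that looks nothing like the original.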

As ML pipelines become increasingly autonomous, the risk intensifies. Agentic systems that automatically discover and fine-tune models may prioritize artifacts that appear recent, relevant, or high-performing, allowing typosquatted resources to propagate from repository to deployment within minutes. Because the failure lies in artifact integrity rather than system malfunction, nothing breaks visibly, and automation compresses the window for detection and human oversight.

Training-time compromise is inherently difficult to detect. Models do not retain clear provenance markers linking behaviors to specific training inputs, and poisoned samples may be statistically indistinguishable from benign data. Once parameters are updated through stochastic optimization, attribution becomes computationally impractical.

Dataset typosquatting is therefore a machine learning supply chain vulnerability, not merely a repository issue. Mitigation requires zero-trust principles applied to training artifacts: strict dependency pinning, cryptographic signing, provenance verification, controlled namespaces, and reproducible training workflows. Artifact selection must not rely solely on name similarity, popularity, or recency. If adversarial inputs shape gradient updates, the resulting behavior is mathematically consistent with the objective function and cannot simply be patched after deployment. In modern ML systems, cautious selection and validation of training resources is a foundational security requirement.
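One of the mitigations above, strict pinning, can be sketched as a digest check that runs before any file reaches the training loop. This is a minimal illustration using only Python's standard library; the helper names are hypothetical, and it assumes the pinned SHA-256 value was recorded from a copy of the artifact that has already been reviewed. It does not replace cryptographic signing or provenance verification.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream the file in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_artifact(path, pinned_sha256):
    """Raise ValueError unless the file's digest matches the pinned value.

    `pinned_sha256` is assumed to come from a reviewed lockfile or manifest
    that is stored separately from the artifact itself.
    """
    actual = sha256_of_file(path)
    if not hmac.compare_digest(actual, pinned_sha256.lower()):
        raise ValueError(
            f"Digest mismatch for {path}: expected {pinned_sha256}, got {actual}"
        )
    return actual
```

A pipeline would call `verify_pinned_artifact` immediately after download and abort on failure, so a lookalike or silently updated artifact never contributes a gradient step.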

References:

Biggio et al., Poisoning Attacks against SVMs, ICML

Shafahi et al., Poison Frogs! Clean-Label Poisoning, NeurIPS

Carlini et al., Poisoning Web-Scale Datasets, USENIX Security

Goldblum et al., Dataset Security for ML, IEEE TPAMI

Chen et al., DataSquat: Dataset Typosquatting, IEEE S&P

Anthropic et al. (2024), LLM Behavior Manipulation Research

ReversingLabs (2023), Malicious ML Models Report